Are you confused about choosing between an electrometer and a multimeter for your electrical measurements? You’re not alone.

Both tools look similar but serve very different purposes. Understanding which one suits your needs can save you time, money, and frustration. You’ll discover the key differences, benefits, and best uses of electrometers and multimeters. By the end, you’ll feel confident picking the right device for your projects and tasks.

Keep reading to unlock the secrets behind these essential measuring instruments!

Electrometer Basics

Understanding electrometers is key to knowing how they differ from multimeters. Electrometers measure electric charge and voltage with great sensitivity. They handle very small currents that multimeters cannot detect. This makes them essential in specific scientific and industrial fields.

Electrometers are precise tools designed for delicate measurements. Their design focuses on accuracy and minimal interference. Let’s explore the basics of electrometers to see what sets them apart.

What Is An Electrometer

An electrometer is an instrument that measures electric charge, voltage, and very small currents. Sensitive models can detect currents in the femtoampere range (10⁻¹⁵ A), far below what a typical multimeter can resolve. Electrometers use very high input impedance so that connecting them barely affects the circuit. Internally, they turn a tiny charge or current into a signal large enough to read.
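To see why input impedance matters, here is a minimal sketch of the loading effect. It models the source and the meter as a simple voltage divider. The 1 GΩ source and the 10 MΩ and 100 TΩ input resistances are illustrative assumptions, not the specifications of any particular instrument.

```python
def measured_voltage(v_source, r_source, r_input):
    """Voltage a meter with input resistance r_input reads across a source
    with internal resistance r_source (simple voltage divider model)."""
    return v_source * r_input / (r_source + r_input)


def loading_error_percent(r_source, r_input):
    """Percentage by which the reading falls below the true source voltage."""
    return 100.0 * r_source / (r_source + r_input)


R_SOURCE = 1e9          # 1 GΩ high-impedance source (assumed for illustration)
R_MULTIMETER = 10e6     # 10 MΩ input resistance (assumed, typical order of magnitude)
R_ELECTROMETER = 1e14   # 100 TΩ input resistance (assumed, illustrative)

for name, r_in in [("multimeter", R_MULTIMETER), ("electrometer", R_ELECTROMETER)]:
    reading = measured_voltage(1.0, R_SOURCE, r_in)
    error = loading_error_percent(R_SOURCE, r_in)
    print(f"{name}: reads {reading:.6f} V of 1 V, loading error {error:.4f} %")
```

With these assumed values, the multimeter reads only about 1% of the true voltage, while the electrometer's loading error is roughly 0.001%.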

Common Uses Of Electrometers

Electrometers are widely used in physics and chemistry labs. They measure ionizing radiation and detect small electrical charges. These tools help in semiconductor testing and research. Environmental monitoring and material science also rely on electrometers. They provide accurate data where precision is crucial.

Key Features Of Electrometers

High sensitivity is the main feature of electrometers. They can measure tiny currents and voltages accurately. Electrometers have very high input resistance to prevent circuit loading. Their design minimizes electrical noise and interference. Many models offer digital displays for easy reading.

Multimeter Essentials

Understanding multimeters is key to choosing the right tool for electrical tasks. Multimeters measure different electrical properties and help diagnose problems in circuits. They are common tools for both beginners and professionals.

This section explains what a multimeter is, its typical uses, and its main features.

What Is A Multimeter

A multimeter is a device, usually handheld, that measures voltage, current, and resistance. It combines several testing functions into one tool. Multimeters come in two types: analog and digital. Digital multimeters are more popular due to their accuracy and ease of use.

Typical Applications

Multimeters are used in many fields like electronics, automotive repair, and home wiring. They check batteries, fuses, and electrical outlets. Electricians use them to find faults in circuits. Hobbyists test small devices and circuits. They help ensure safety and proper function.

Core Features Of Multimeters

Most multimeters measure voltage, current, and resistance. They include a display screen for easy reading. Some have auto-ranging, which adjusts measurement settings automatically. Many models feature continuity testing with a sound alert. Safety features include fuses and overload protection. Portable design allows use in various environments.
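The idea behind auto-ranging can be sketched in a few lines. This is only a simplified illustration: the list of ranges is hypothetical, and real meters add refinements that are not modeled here.

```python
# Hypothetical full-scale voltage ranges, in volts (illustration only).
RANGES_V = [0.2, 2.0, 20.0, 200.0, 1000.0]


def pick_range(reading, ranges=RANGES_V):
    """Return the smallest range whose full scale covers |reading|,
    or None if the reading exceeds every range (overload)."""
    for full_scale in sorted(ranges):
        if abs(reading) <= full_scale:
            return full_scale
    return None


for volts in (0.05, 1.5, -12.0, 5000.0):
    print(volts, "->", pick_range(volts))
```

Picking the smallest range that still fits the signal gives the best resolution the meter can offer for that reading.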

Measurement Capabilities

Measurement capabilities define what each tool can do. Electrometers and multimeters serve different purposes in electrical testing. Understanding their measurement strengths helps choose the right device.

Voltage Measurement

Multimeters measure both AC and DC voltage. They work well for general electrical tasks. Electrometers measure voltage from high-resistance sources without loading them, so the reading stays close to the true value. This makes them ideal for sensitive experiments or research.

Current Measurement

Multimeters measure current when connected in series with the circuit. They typically cover currents from microamps up to several amps. Electrometers measure extremely small currents, in the picoamp range and below. This ability is crucial for detecting tiny electrical signals.
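To get a feel for how small these currents are, the short sketch below converts a current into the number of electrons passing per second. The function name is chosen here for illustration. The elementary charge is the exact SI value.

```python
ELEMENTARY_CHARGE = 1.602176634e-19  # coulombs per electron (exact SI value)


def electrons_per_second(current_amps):
    """Number of electrons per second that make up the given current."""
    return current_amps / ELEMENTARY_CHARGE


for label, amps in [("1 mA", 1e-3), ("1 pA", 1e-12), ("1 fA", 1e-15)]:
    print(f"{label}: about {electrons_per_second(amps):.3g} electrons per second")
```

A current of 1 fA works out to only about 6,240 electrons per second, which is why electrometer measurements are so easily disturbed.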

Resistance Measurement

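Multimeters measure resistance to check circuit continuity or faults. They provide quick and easy readings for everyday resistance values. Electrometers are not meant for those routine checks. Many can, however, measure very high resistances, such as insulation resistance, by applying a known voltage and measuring the tiny current that flows.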
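Very high resistance values are usually derived from Ohm's law: a known voltage is applied and the resulting small current is measured. The sketch below shows that arithmetic. The 100 V test voltage and 2 pA reading are made-up example values.

```python
def resistance_from_vi(applied_volts, measured_amps):
    """Resistance in ohms from a known applied voltage and a measured current."""
    if measured_amps == 0:
        raise ValueError("measured current is zero; resistance cannot be computed")
    return applied_volts / measured_amps


# Example values (assumed): 100 V across an insulator, 2 pA of leakage current.
r = resistance_from_vi(100.0, 2e-12)
print(f"Insulation resistance: {r:.2e} ohms")
```

With these example numbers the result is 50 TΩ. A reading like that depends on measuring picoamp-level current, which is where an electrometer's sensitivity is needed.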

Charge And Capacitance

Electrometers can measure electric charge directly, and capacitance can be worked out from the charge and the voltage. This suits advanced lab and physics applications. Multimeters cannot measure charge, and many offer only a basic capacitance range. Their design targets everyday electrical tasks.
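Charge, capacitance, and voltage are linked by Q = C × V. The small sketch below applies that relation in both directions. The 100 pF and 5 V figures are example values only.

```python
def charge_coulombs(capacitance_farads, volts):
    """Charge stored on a capacitor: Q = C * V."""
    return capacitance_farads * volts


def capacitance_from_charge(charge, volts):
    """Capacitance from a measured charge and voltage: C = Q / V."""
    if volts == 0:
        raise ValueError("voltage is zero; capacitance cannot be computed")
    return charge / volts


q = charge_coulombs(100e-12, 5.0)       # 100 pF charged to 5 V (example values)
print(f"Stored charge: {q:.3e} C")
print(f"Capacitance recovered: {capacitance_from_charge(q, 5.0):.3e} F")
```

In this example the stored charge is 500 pC, far too small for a multimeter to register.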

Accuracy And Sensitivity

Accuracy and sensitivity are key factors in choosing between an electrometer and a multimeter. These qualities affect how well each device measures electrical signals. Understanding their differences helps in selecting the right tool for your needs.

Precision Levels

Electrometers offer very high precision at low signal levels. They resolve small charges and currents in fine detail. Multimeters provide good precision for everyday measurements, but not as fine as electrometers. This makes electrometers ideal for tasks needing exact low-level readings.

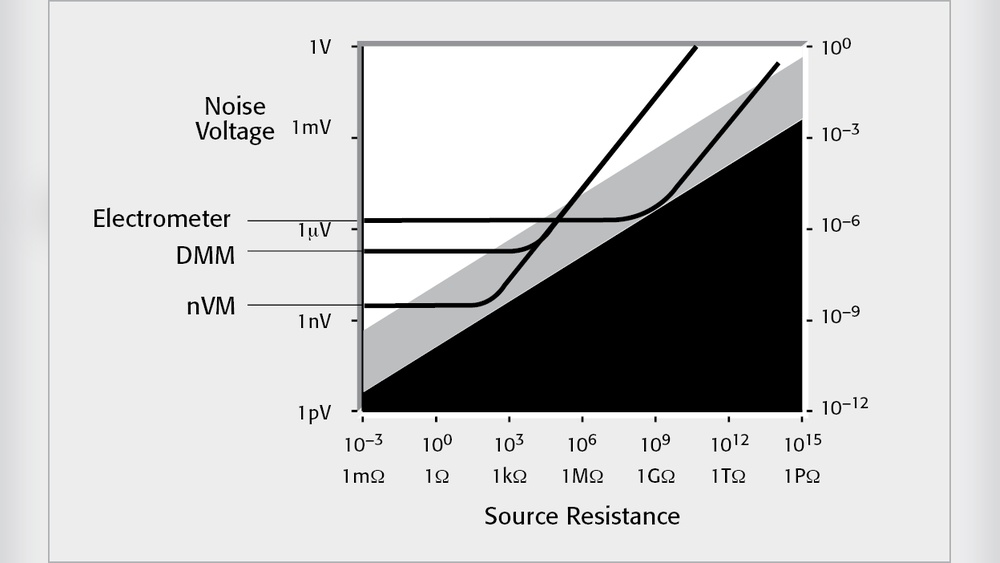

Sensitivity To Low Currents

Electrometers detect extremely low currents, even in the femtoamp range. Multimeters usually measure higher currents and may miss tiny signals. This sensitivity makes electrometers suitable for delicate experiments and research.

Noise And Interference

Electrometers have advanced designs to reduce noise and interference. This results in clear, stable readings. Multimeters can be affected by electrical noise more easily. Noise can cause errors in measurements, especially at low levels.
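One common way to tame random noise in any low-level reading is to average many samples. The sketch below simulates this with made-up numbers: a 1 pA signal with 0.5 pA of random noise. The noise model and function names are assumptions for illustration, not a description of how any specific instrument works.

```python
import random


def simulate_readings(true_amps, noise_rms_amps, n, seed=0):
    """Generate n simulated readings with Gaussian noise (illustrative model)."""
    rng = random.Random(seed)
    return [rng.gauss(true_amps, noise_rms_amps) for _ in range(n)]


def averaged_reading(readings):
    """Plain arithmetic mean of the readings."""
    return sum(readings) / len(readings)


readings = simulate_readings(true_amps=1e-12, noise_rms_amps=0.5e-12, n=10000, seed=1)
print(f"Single reading:   {readings[0]:.3e} A")
print(f"Averaged reading: {averaged_reading(readings):.3e} A")
```

For independent random noise, averaging N readings shrinks the scatter by about the square root of N, so the averaged value sits much closer to the true current than any single reading.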

Design And Build

Design and build play a key role in choosing between an electrometer and a multimeter. Each device serves different purposes, so their designs reflect that. Understanding their size, display, and power needs helps select the right tool.

Size And Portability

Electrometers are often larger and heavier than multimeters. They need extra shielding to measure very small currents accurately. Multimeters come in compact, handheld models. They are easy to carry for everyday tasks. Portability favors multimeters for fieldwork and quick checks.

Display And Interface

Multimeters usually have simple digital displays. They show numbers clearly and some include backlights. Buttons and dials let users select measurement types easily. Electrometers have more complex displays. They show detailed data and may include graphs. Their interface can be harder for beginners.

Power Requirements

Multimeters run on standard batteries like AA or 9V cells. This makes them convenient and quick to power up. Electrometers are often bench instruments that need a stable external power source. Some models require special batteries or adapters. Stable power helps maintain accuracy in sensitive measurements.

Applications Compared

Understanding the applications of electrometers and multimeters helps choose the right tool. Both measure electrical properties but serve different purposes. Their unique features suit various environments and tasks.

Laboratory Use

Electrometers excel in labs needing very low current or voltage measurement. They detect tiny electrical charges with high precision. Multimeters measure voltage, current, and resistance but with less sensitivity. Labs use multimeters for general checks and electrometers for detailed research.

Field Work

Multimeters are common in field work for quick and robust testing. They handle rough conditions and measure multiple parameters easily. Electrometers are less common outdoors due to sensitivity and fragility. Field technicians prefer multimeters for speed and durability.

Educational Settings

Schools and colleges use multimeters for teaching basic electrical concepts. They are simple, safe, and versatile for students. Electrometers appear in advanced courses focused on physics or electronics research. Both tools help students understand electricity at different levels.

Industrial Applications

Industries rely on multimeters for routine maintenance and troubleshooting. They check circuits, batteries, and motors efficiently. Electrometers find use in specialized tasks like measuring insulation resistance or tiny leakage currents. Industrial settings choose tools based on measurement needs and conditions.

Cost And Availability

Cost and availability are key factors in choosing between an electrometer and a multimeter. Both tools serve important roles in electrical measurements but differ widely in price and market presence. Understanding these differences helps buyers make informed decisions.

Price Range Differences

Multimeters are generally affordable. Basic models start at low prices, suitable for everyday use. Advanced multimeters cost more but remain reasonable for most users. Electrometers, on the other hand, are expensive. They use specialized parts and precise technology. Prices can be several times higher than multimeters. This cost reflects their high sensitivity and accuracy.

Market Availability

Multimeters are widely available in stores and online. Most hardware shops stock them. Electrometers are less common. They are usually found through specialized suppliers. Buying an electrometer may require contacting manufacturers or distributors. This limited availability can affect how quickly you get the tool.

Maintenance And Calibration

Multimeters need little maintenance. Calibration is simple and often user-friendly. Electrometers require careful maintenance. Calibration must be precise to keep accuracy. It often involves professional service. This adds to the overall cost and effort of owning an electrometer.

Choosing The Right Tool

Choosing the right tool between an electrometer and a multimeter matters a lot. Each device has its own strengths. Picking the correct one can save time and improve accuracy. Understanding your needs helps make the best choice.

Assessing Your Needs

First, think about the type of measurements you require. Electrometers measure very small electrical charges and voltages. Multimeters handle voltage, current, and resistance in everyday circuits. If your work involves sensitive, low-level signals, an electrometer fits better. For general electrical tasks, a multimeter is usually enough.

Long-term Investment

Consider how often you will use the tool. Electrometers cost more and need careful handling. Multimeters are cheaper and more durable. If you need a device for many projects over time, investing in a reliable multimeter may be wiser. For rare, precise measurements, spending on an electrometer can be worth it.

Compatibility With Projects

Match the tool to your project type. Electronics repair and household wiring require a multimeter. Scientific research or high-tech labs often need an electrometer. Check if your project needs high sensitivity or just basic readings. This ensures you choose a tool that works well without extra features you won’t use.
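The selection advice above can be sketched as a simple rule-of-thumb helper. The function name and both thresholds are assumptions made for this illustration. Check your own meter's specifications before relying on any cutoff.

```python
# Illustrative thresholds (assumptions, not manufacturer limits).
LOW_CURRENT_LIMIT_A = 1e-9      # below about 1 nA, assume a multimeter struggles
HIGH_SOURCE_RESISTANCE = 1e8    # above about 100 MΩ, assume loading becomes severe


def suggest_instrument(min_current_amps=None, source_resistance_ohms=None,
                       needs_charge=False):
    """Suggest 'electrometer' or 'multimeter' from rough project requirements."""
    if needs_charge:
        return "electrometer"
    if min_current_amps is not None and min_current_amps < LOW_CURRENT_LIMIT_A:
        return "electrometer"
    if (source_resistance_ohms is not None
            and source_resistance_ohms > HIGH_SOURCE_RESISTANCE):
        return "electrometer"
    return "multimeter"


print(suggest_instrument(min_current_amps=5e-12))       # picoamp-level signal
print(suggest_instrument(min_current_amps=1e-3))        # everyday milliamp circuit
print(suggest_instrument(source_resistance_ohms=1e10))  # very high-impedance source
```

The helper only encodes the basic questions to ask: how small is the signal, how high is the source resistance, and whether charge needs to be measured at all.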

Frequently Asked Questions

What Is The Main Difference Between Electrometer And Multimeter?

An electrometer measures very small electrical charges and currents with high precision. A multimeter measures voltage, current, and resistance for general use. Electrometers are more sensitive and are used in specialized fields, while multimeters are versatile tools for everyday electrical testing.

When Should I Use An Electrometer Over A Multimeter?

Use an electrometer when measuring extremely low currents or charges. It is ideal for sensitive experiments and research. Multimeters are better for standard electrical measurements in circuits and devices. Electrometers provide higher accuracy in detecting minimal electrical signals.

Can A Multimeter Measure Charge Like An Electrometer?

No, a multimeter cannot accurately measure electrical charge. It measures voltage, current, and resistance but lacks the sensitivity for low charge detection. Electrometers are designed specifically for precise charge and low current measurements, which multimeters cannot perform effectively.

Are Electrometers More Expensive Than Multimeters?

Yes, electrometers are generally more expensive due to their advanced sensitivity and precision. They require specialized components and calibration. Multimeters are affordable, widely available, and suitable for common electrical tasks, making them cost-effective for general use.

Conclusion

Electrometers and multimeters serve different purposes in measurements. Electrometers measure tiny electrical charges with high precision. Multimeters check voltage, current, and resistance easily. Choose an electrometer for very sensitive tasks. Pick a multimeter for general electrical work. Both tools help understand electrical properties clearly.

Knowing their differences improves your tool choice. Use the right device to get accurate results. This makes your work safer and more efficient. Simple tools, clear results.

I’m Asif Ur Rahman Adib, an Electrical Engineer and lecturer. My journey began in the lab, watching students struggle with instruments they used every day without fully understanding them. Over time, I’ve combined teaching, research, and hands-on experience to help others grasp electrical concepts clearly, safely, and practically—whether it’s understanding a circuit or mastering a multimeter.